-

Why has Siili chosen to focus on AI?

AI has been an integral part of the projects we have been engaged in for several years now. This has enabled us to hone our own particular expertise in design, technology, and data. Our experience tells us that a firm grip on all stages of the process, supported by solid data expertise and a multidisciplinary team, is the only real way to get a holistic and realistic point of view. AI-focused activity is therefore a natural extension of our current offerings and, in many ways, a logical consequence of our back catalogue of work. We simply wouldn’t feel credible in such activity without having accumulated the necessary prior experience.

In our view, AI must be trustworthy, i.e. useful, compliant, and ethical, and it must always provide the transparency needed to support these qualities. We believe in a multidisciplinary approach in which designers, data scientists, developers, and project managers all play a role. We also develop our skills further through discussions and opinion-sharing exercises, which keep us up to speed with emerging techniques and principles associated with AI methodologies.

At the beginning of an AI development journey with our clients, co-creation and co-validation are done by way of our AI Design Sprint™. This, in turn, provides a rapid testbed for ideas. Such techniques enable us to discover and define the crux of business challenges and opportunities, and then use AI as a vehicle to arrive at potential solutions. We then conduct experiments to validate the direction, as opposed to immediately building long-term solutions that may not prove fit for purpose further down the road.

Throughout the whole journey, we adhere to the responsible use of technology. We consider the implications for society, the user, and the future in everything we do. We design for people, and thus we always consider the true benefits for the users of our clients’ services. We aim for viable, feasible, desirable, and ethical outcomes and strive to stay on top of regulations and the evolution of AI. This enables us to guide our clients effectively in their work at any given moment, while educating them on the potential risks and regulatory changes associated with AI.

-

Why did Siili join an AI research project?

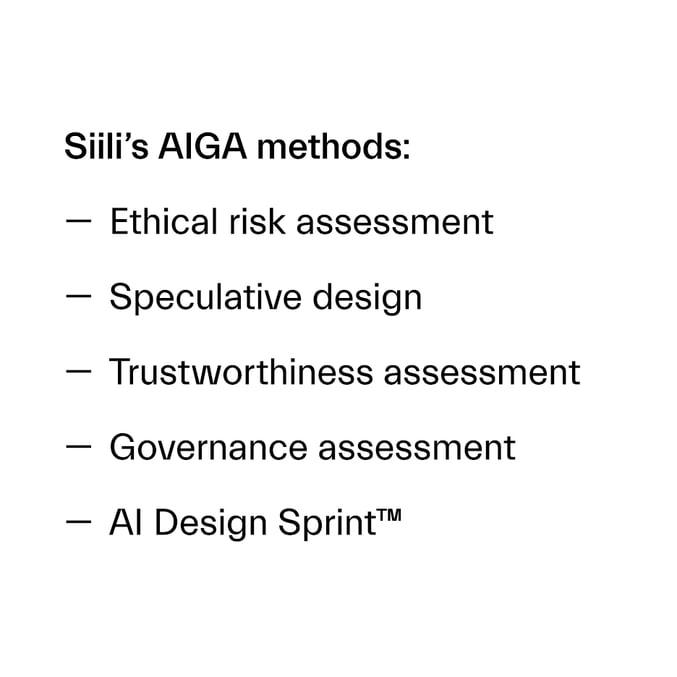

Siili has been working with algorithmic decision support systems in both sensitive and critical contexts for some time now. Through this, we have identified a need for new methods to support the development lifecycle of AI solutions, while also taking ethical aspects into consideration. This is one of the primary reasons why Siili joined the AI Governance and Auditing (AIGA) research program. The program is led by a consortium of Finnish universities, public institutions, and private companies. The initiative focuses on how to identify, design, implement, and use explainable AI solutions that are ethically and legally acceptable and result in sustainable business value.

AIGA’s goals center on developing solutions for putting responsible AI into practice. In effect, this means laying out best-in-class governance mechanisms and auditing principles for algorithmic decision-making, with the ultimate goal of building a commercialization roadmap for AI governance and auditing.

The 2-year program commenced in September 2020 and aims to develop capabilities, international business opportunities, publications, international research collaboration and visibility, and social impact on the topic of AI.

Our cooperation in the project has been extremely valuable, because it has allowed us to research real client cases and examine important aspects such as transparency. For example, the public sector has distinct demands in this area, and the program has given us unique insights into them. More info on the AIGA project can be found at https://ai-governance.eu/.

-

How do we keep humans in the loop?

Not only should AI be responsible, but it should also be designed to be human-centric. Ben Shneiderman, an acclaimed proponent of Human-Centered Artificial Intelligence*, talks a great deal about ensuring that high levels of automation and high levels of human control co-exist, in order to deliver reliable, trustworthy, and safe applications. It is true that some situations require a high level of machine control for safety reasons, for example car airbags, corrective steering, and medical aids. In many other situations, however, human control should not be taken away.

Without dwelling on the unfortunate high-profile stories in which highly automated systems went wrong and made the headlines, let’s consider something as simple as a modern thermostat. These smart devices promise to lower energy consumption, and therefore costs, by means of AI algorithms that constantly check electricity prices and choose the optimal moment to heat our houses. High levels of automation might lead these systems to increase the heating at night, when energy prices are much lower than during the day, resulting in a house that is too warm for the occupants. If householders are unable to disable such functionality, these devices fall short in terms of providing a comfortable living environment and ultimately erode people’s trust in the abilities of AI. In this case, economy took precedence over comfort. We can’t blame AI for this, as it was simply carrying out a preordained task. However, it does highlight that AI can’t please all the people, all the time.
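To make the thermostat example concrete, here is a minimal Python sketch of how such a device could combine price-based automation with human control. It is an illustration only, not a description of any real product: the names `ComfortSettings` and `choose_target`, the price threshold, and the temperature values are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ComfortSettings:
    """Limits chosen by the householder (hypothetical names and values)."""
    min_temp_c: float = 19.0
    max_temp_c: float = 22.0
    manual_target_c: Optional[float] = None  # set when the occupant takes over


def choose_target(price_now: float, cheap_price: float,
                  settings: ComfortSettings) -> float:
    """Return the target temperature for the current hour."""
    # Human control always wins: a manual target bypasses the optimizer.
    if settings.manual_target_c is not None:
        return settings.manual_target_c
    # Automation: pre-heat while electricity is cheap, but never above
    # the ceiling the occupants have chosen.
    if price_now <= cheap_price:
        return settings.max_temp_c
    # Otherwise coast at the lowest temperature the occupants accept.
    return settings.min_temp_c


settings = ComfortSettings()
night_target = choose_target(price_now=0.05, cheap_price=0.10, settings=settings)

settings.manual_target_c = 18.0  # the occupant finds the bedroom too warm
overridden_target = choose_target(price_now=0.05, cheap_price=0.10, settings=settings)
```

The design choice worth noting is the order of the checks: the optimizer only runs inside the range the occupants have set, and a manual target switches it off entirely, so economy cannot silently take precedence over comfort.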

A logical question that this example raises is how we can maintain an equilibrium with smart technology if we haven’t considered the human and societal perspectives during design and development. It also raises the question of what kind of future we want in 30 years.

Let’s remember for a moment the most testing times in our lives: death, giving birth, and sickness. At such times, humans will always want to have another human present, rather than a robot. The need for touch, emotion, and presence will never disappear. Repetitive routine tasks, on the other hand, could be taken over by machines. AI can play a supportive role by offering recommendations. For example, a doctor could focus on the human being, the holistic point of view, and psychosocial encounters and interactions, if AI simply chipped in with potentially helpful recommendations.

A great many tasks could be allocated to your technical helper, but where do we draw the line? When do we hand over the controls? What are we ready to give up?

-

So...what now?

AI is playing a huge role in how businesses operate, from helping transform data collection and processing to informing transformative business opportunities. While AI and automation hold tremendous value in terms of time and cost savings, we know many organizations have had their fingers burnt by ill-considered AI initiatives in the past. The fact is that AI done right is beneficial in many ways, and it’s never too late to jump on the train.

There are many different stages in the journey to full AI utilization. Whether you are defining your AI strategy, designing your AI business case, assessing or implementing your AI governance, or prototyping an AI solution, we can support you with the methods we have developed and acquired for the entire AI development lifecycle.

Whatever stage of the journey you’re at, we’d love to hear how we can partner with you to reach your desired destination (time and time again). We see AI as the great enabler, supporting everything from automating the heavy lifting associated with repetitive tasks to creating the most outstanding digital customer experience.

So, step aboard, and let’s discover the AI opportunities within your business. Stay tuned for forthcoming posts.

Written by Andrea Vianello, Elsa Tuomi, Simon Robson

References:

*Shneiderman, B. (2022). Human-Centered AI. Oxford University Press.
