I was recently blessed to take part in the AWS Summit in Stockholm in May 2023. I wanted to take a moment to write about the highlights and, at the same time, practice some generative AI shenanigans. This is that text. I will detail my process in a later post.

Foreword: Partner Summit

AWS Summit

The Keynote: Shaping the Future with Technology

MLOps: Deconstructing Machine Learning

Low-Code-No-Code: The Future of Machine Learning

Generative AI: Powering Innovation

Conclusion

Foreword: Partner Summit

The AWS Partner Summit held on May 10 underscored the pivotal role of partnerships in catalyzing digital transformation and migration to the cloud. With a robust collaboration between AWS and its partners, businesses can accelerate their time-to-market, foster innovation, and bolster security. Key Takeaways:

Synergizing AWS and Partners

Vittorio Sanvito, Director of EMEA Partner Development for AWS, highlighted the "force multiplier" effect resulting from the synergistic efforts of AWS and its partners. Tools like the AWS Partner Center 2.0 and the Migration Acceleration Program (MAP) have proven instrumental in expediting businesses' migration to the cloud.

Fostering Regional Growth

Alejandro Alonso Uria, AWS Head of Partner organization, EMEA North, outlined the substantial potential for growth in the Nordics, Baltics, and Belarus region, emphasizing three CEO priorities: digital transformation, inflation management, and talent acquisition. The AWS Solution Factory and marketplace are poised to deliver swift and standardized industry solutions.

Leveraging IoT and Sustainability

Swedspot's strategic use of AWS for its IoT platform exemplifies the transformative power of data analytics in predictive maintenance and sustainable practices. As part of their commitment to sustainability, Swedspot relies on AWS's shared responsibility model, which leaves the underlying infrastructure to AWS so that they can focus on the sustainability aspects of their own applications.

AWS Summit

The AWS Summit kicked off with a keynote session that emphasized the importance of knowledge sharing and pushing the boundaries of what is possible. Each session was a deep dive into specific areas of technology, providing invaluable insights for our audience. Here are some of my key takeaways:

The Keynote: Shaping the Future with Technology

The keynote speaker highlighted the importance of technology as a problem-solving tool, cost-cutter, and growth driver. Attendees were urged to immerse themselves in the diversity of innovative solutions showcased at the summit, to explore, and to learn. The partnerships that make such events possible were acknowledged, recognizing that collaboration is at the heart of innovation.

The keynote message was clear: Embrace technology, be curious, and keep learning. The conversation doesn't end here, as the summit's sessions offer a wealth of resources for the audience to explore further.

MLOps: Deconstructing Machine Learning

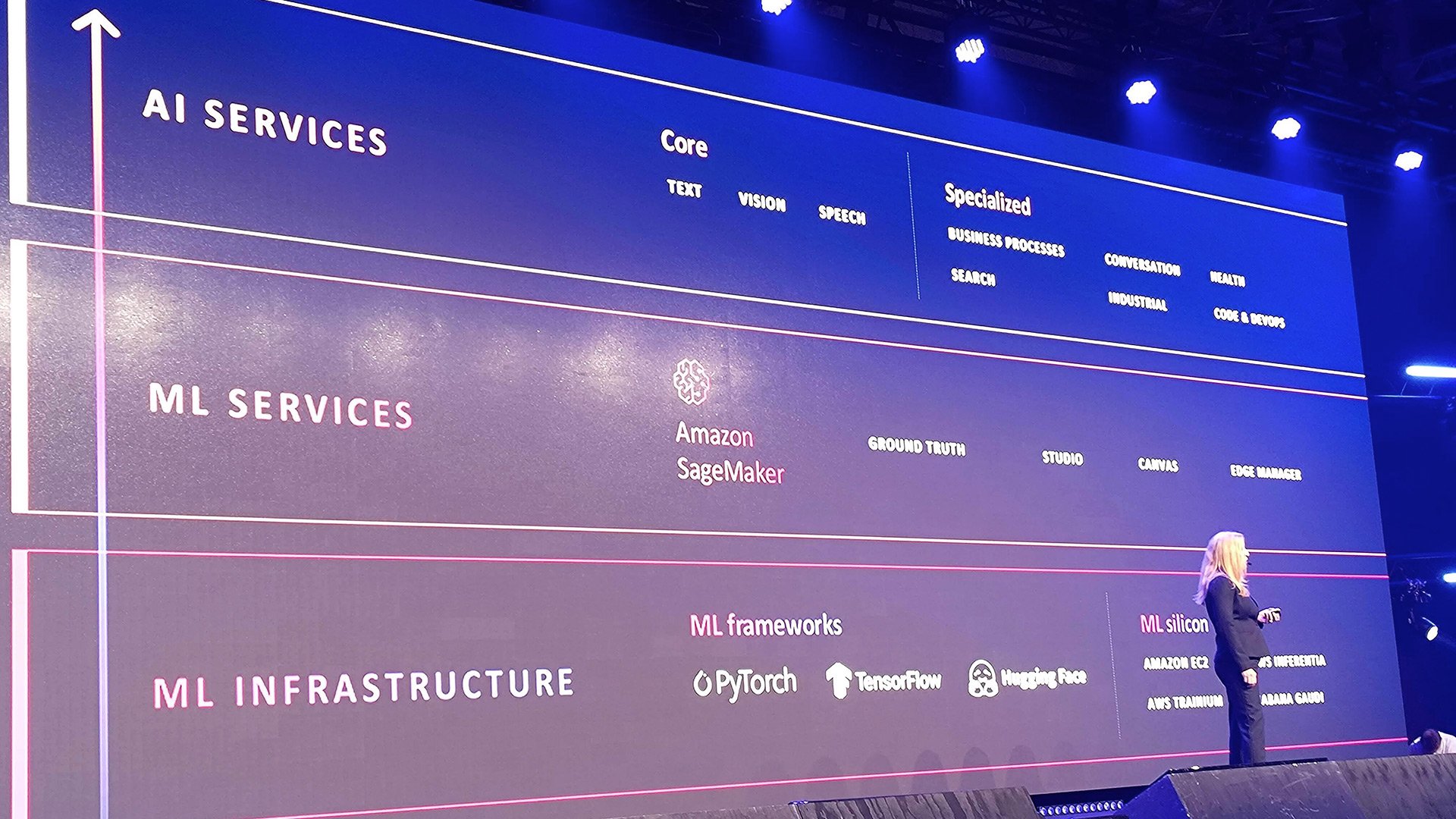

I attended a session on MLOps, which shed light on the challenges clients face in the field of machine learning. AWS Solutions Architect Niklas Palm and Qred Data Scientist Hampus Larsson demystified the machine learning stack, dividing it into three layers: the bare-bones, purpose-built infrastructure layer; the machine learning-optimized infrastructure; and the AI services layer.

The talk discussed the machine learning capabilities provided by AWS, including purpose-built containers and machine learning-optimized infrastructure. These tools are designed to simplify and accelerate the machine learning workflow. The session emphasized the need for continuous learning and standard engineering practices, along with the crucial role of feedback in improvement.

There are a lot of options to do machine learning on AWS.

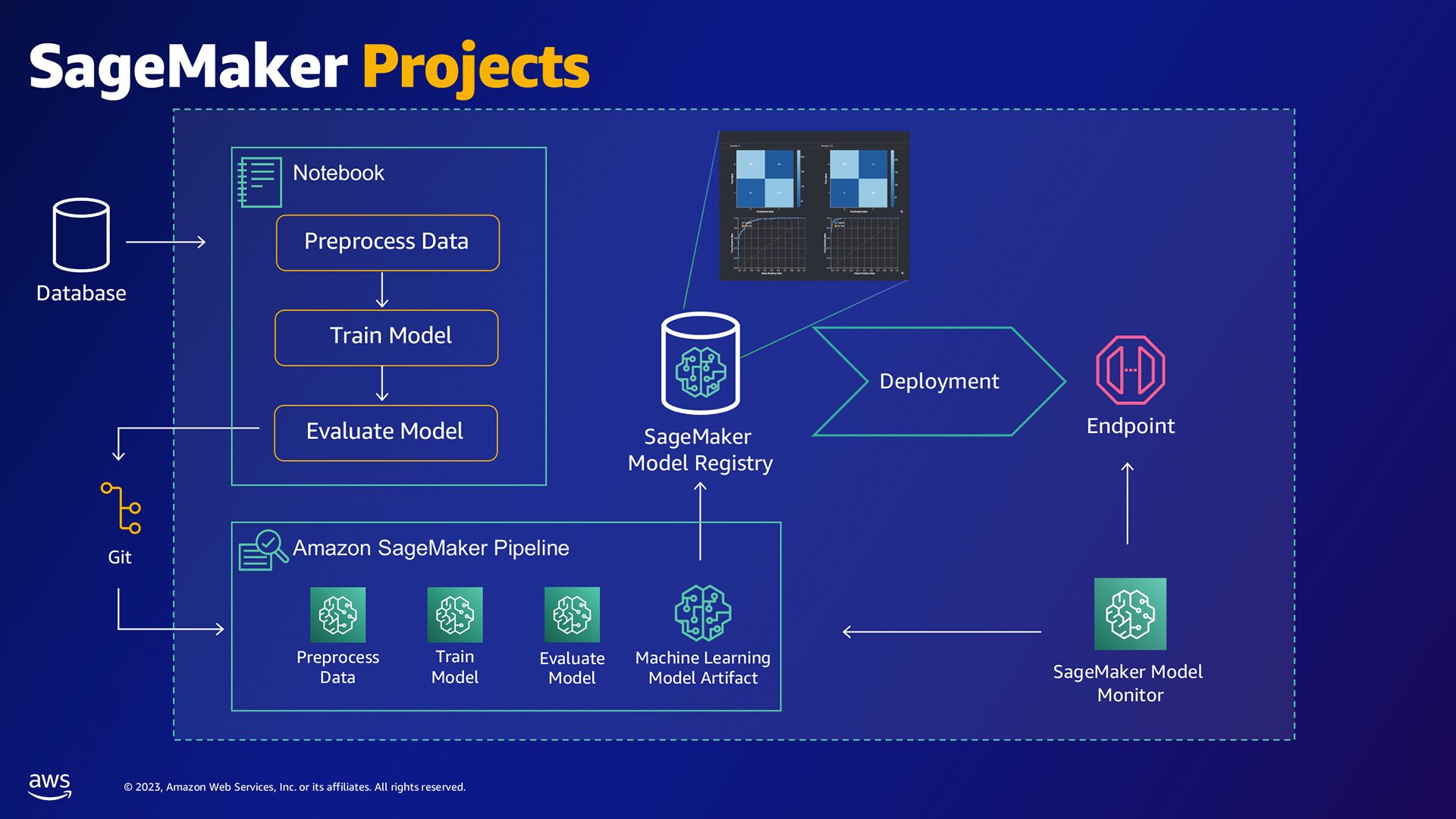

MLOps on AWS enables template notebooks for model standardisation, pipeline shipping for faster model retraining and model registry for governance.
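To make the "model registry for governance" idea a bit more concrete, here is a toy sketch of my own in Python. It is not the SageMaker Model Registry API: the class names, the status strings, and the example artifact URIs are all hypothetical. It only illustrates the principle that every trained model is recorded as a version, and that only an explicitly approved version is picked up for deployment.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelVersion:
    version: int
    artifact_uri: str          # where the trained model artifact is stored
    metrics: dict              # evaluation metrics recorded at training time
    status: str = "Pending"    # hypothetical states: "Pending" or "Approved"


@dataclass
class ModelRegistry:
    versions: list = field(default_factory=list)

    def register(self, artifact_uri: str, metrics: dict) -> ModelVersion:
        """Record a newly trained model as the next version, not yet approved."""
        mv = ModelVersion(len(self.versions) + 1, artifact_uri, metrics)
        self.versions.append(mv)
        return mv

    def _get(self, version: int) -> ModelVersion:
        for mv in self.versions:
            if mv.version == version:
                return mv
        raise KeyError(f"unknown model version {version}")

    def approve(self, version: int) -> None:
        """Governance step: a reviewer marks one version as deployable."""
        self._get(version).status = "Approved"

    def latest_approved(self) -> Optional[ModelVersion]:
        """Return the newest approved version, or None if nothing is approved."""
        approved = [mv for mv in self.versions if mv.status == "Approved"]
        return approved[-1] if approved else None


registry = ModelRegistry()
registry.register("s3://example-bucket/model-v1.tar.gz", {"auc": 0.81})
registry.register("s3://example-bucket/model-v2.tar.gz", {"auc": 0.84})
registry.approve(1)
print(registry.latest_approved())
```

In this sketch version 2 scores better but stays out of production until someone approves it, which is the governance point the slide was making.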

- The speakers emphasized the importance of refactoring code for a production environment and applying standard engineering practices. This is crucial for maintaining the efficiency and reliability of machine learning systems in a production setting.

- Niklas also mentioned Amazon EC2 Inf1 instances, powered by AWS Inferentia chips, which AWS says deliver up to 70% lower cost per inference than current-generation GPU-based instances. Trn1 instances, based on AWS Trainium chips, were presented as the most cost-effective option for ML training in the cloud.

- Hampus highlighted the growth of machine learning models, from millions of parameters three years ago to hundreds of billions of parameters today. These larger models, often called foundation models, enable powerful generative AI applications like ChatGPT.

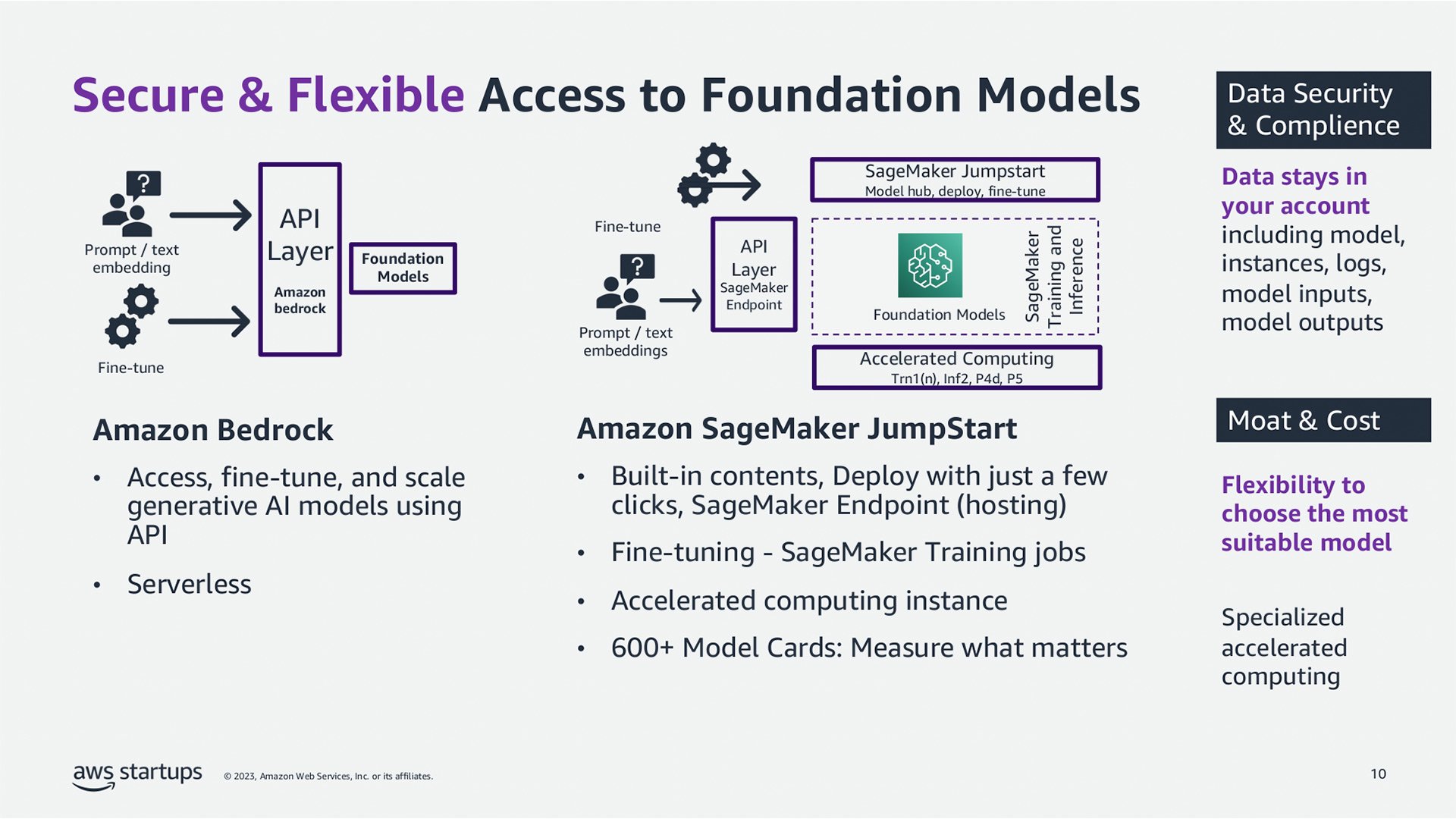

- Niklas announced Amazon Bedrock and the Titan models, both in limited preview. Bedrock makes foundation models from providers like Anthropic and Stability AI accessible via an API, enabling customers to privately customize models with only a couple of pieces of labeled data.

- The talk ended with a discussion on integrating data sources for quick access and action on data, no matter where it resides. Niklas mentioned the dreaded ETL (extract, transform, load) process, emphasizing the need to connect the dots between different data sources to build a comprehensive view of the business and garner the richest insights.
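For readers wondering what "accessible via an API" looks like in practice, below is a minimal Python sketch of calling a foundation model through Bedrock. Bedrock was in limited preview at the time of the Summit, so this reflects the `bedrock-runtime` client in boto3 as it became available later, not anything shown in the session. The model ID and the prompt are my own assumptions, and the sketch covers plain invocation only, not the private customization mentioned above.

```python
import json

# Assumed model ID for illustration; which models you can use depends on
# your account and region.
MODEL_ID = "anthropic.claude-3-haiku-20240307-v1:0"


def build_request(prompt: str, max_tokens: int = 256) -> str:
    """Build a JSON request body in the Anthropic messages format."""
    return json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    })


def ask(client, prompt: str) -> str:
    """Send one prompt through a bedrock-runtime client and return the reply text."""
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=build_request(prompt),
        contentType="application/json",
        accept="application/json",
    )
    payload = json.loads(response["body"].read())
    # The reply is a list of content blocks; keep only the text ones.
    return "".join(
        block["text"] for block in payload["content"] if block["type"] == "text"
    )


# Usage (requires boto3, AWS credentials, and access to the model):
#   import boto3
#   client = boto3.client("bedrock-runtime")
#   print(ask(client, "Summarize the benefits of MLOps in one sentence."))
```

Passing the client in as a parameter keeps the function easy to try out with a stub before any AWS access is in place.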

Low-Code-No-Code: The Future of Machine Learning

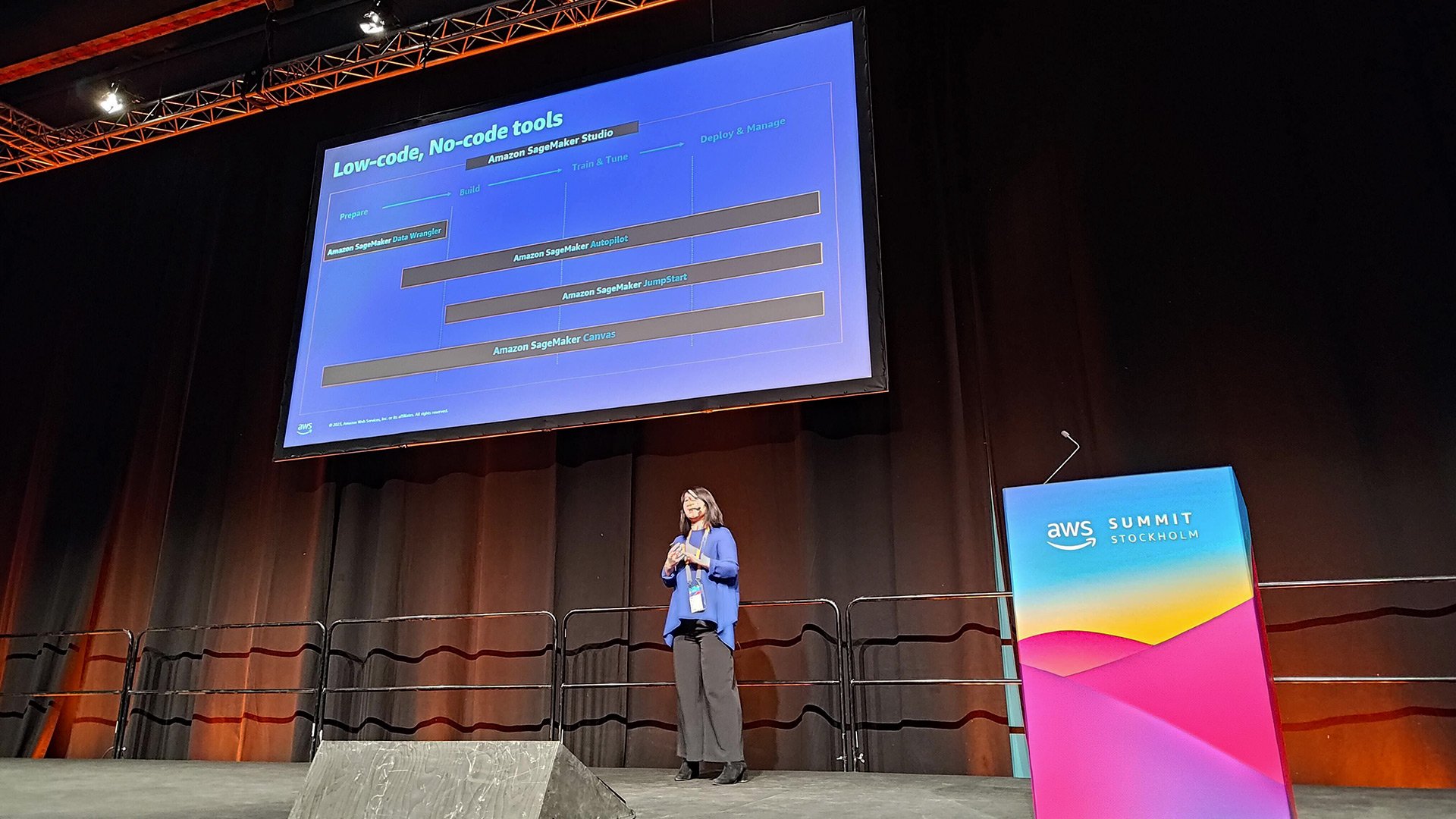

The Low-Code-No-Code session painted a picture of a future where data preparation and model building are simplified, eliminating the bottleneck on machine learning resources. This democratization of machine learning is not only crucial in data science but also in improving machine learning workflows.

Xiaoyu Xing, AWS Solutions Architect, emphasized the need for business analysts or domain experts to harness the power of data, highlighting the importance of interdisciplinary knowledge in extracting value from massive amounts of data.

Xiaoyu Xing gave the presentation on low-code, no-code tooling on AWS.

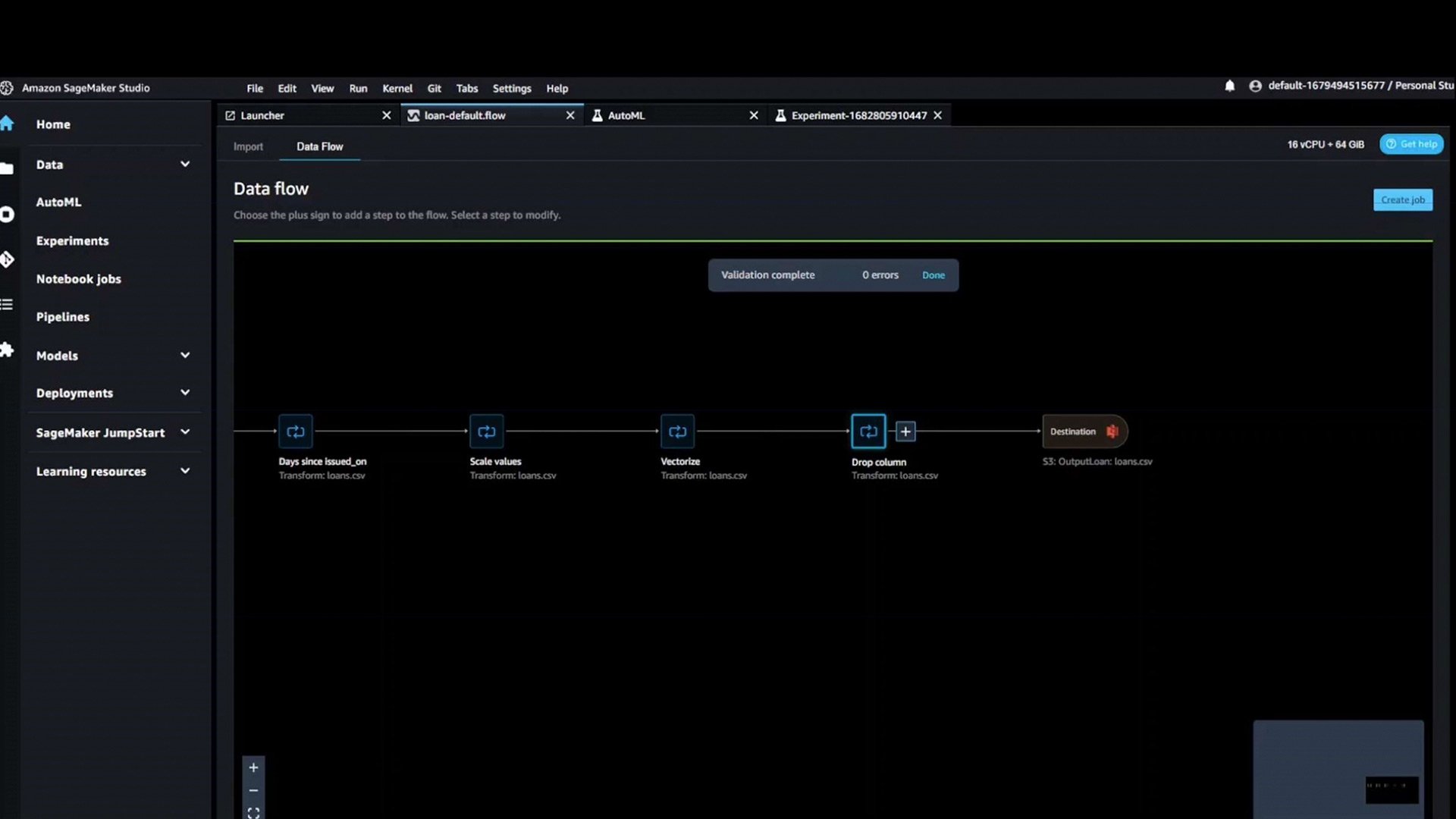

Amazon SageMaker Autopilot helps you build your models, fine-tune them, and deploy them into production.

- The goal of Low-Code-No-Code tools is to make machine learning practitioners more productive while preserving the flexibility and visibility of the solutions they are building. These tools help accelerate the machine learning workflow by removing the need to write boilerplate code, automating experimentation, and providing built-in capabilities for quicker deployment.

- SageMaker Data Wrangler: This tool helps you prepare your data quickly, for example by selecting the columns you need and converting them to the right data types. According to AWS, SageMaker Data Wrangler reduces the time it takes to aggregate and prepare data for machine learning from weeks to minutes. With Data Wrangler, you can simplify data preparation and feature engineering, and complete each step of the data preparation workflow, including data selection, cleansing, exploration, and visualization, from a single visual interface.
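To give a feel for the boilerplate these tools are meant to remove, here is a small hand-written Python sketch of the selection, cleansing, and exploration steps on a tiny dataset. It does not use Data Wrangler or any AWS API. The dataset, the column names, and the helper functions are all made up for illustration.

```python
import csv
import io
import statistics

# Hypothetical raw export with a missing value and an unparsable value.
RAW = """customer_id,age,monthly_spend,churned
1,34,120.5,no
2,,80.0,yes
3,45,not_available,no
4,29,60.25,yes
"""


def load_rows(text: str) -> list:
    """Read CSV text into a list of dicts keyed by column name."""
    return list(csv.DictReader(io.StringIO(text)))


def clean(rows: list) -> list:
    """Select the columns of interest, cast their types, drop rows that fail."""
    cleaned = []
    for row in rows:
        try:
            cleaned.append({
                "age": int(row["age"]),
                "monthly_spend": float(row["monthly_spend"]),
                "churned": row["churned"] == "yes",
            })
        except ValueError:
            continue  # skip rows with missing or malformed values
    return cleaned


def summarize(rows: list) -> dict:
    """Basic exploration: row count, column means, and churn rate."""
    return {
        "rows": len(rows),
        "mean_age": statistics.mean(r["age"] for r in rows),
        "mean_spend": statistics.mean(r["monthly_spend"] for r in rows),
        "churn_rate": sum(r["churned"] for r in rows) / len(rows),
    }


rows = clean(load_rows(RAW))
print(summarize(rows))
```

Every new column or data quality rule means more code like this, which is the kind of work a visual tool lets a business analyst do without writing it by hand.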

Generative AI: Powering Innovation

Lastly, the Generative AI session explored the transformative potential of this technology across various fields. From summarizing calls and analyzing sentiment to proactive reasoning and question analysis, the possibilities seem endless. Yina Ye discussed the application of generative AI in business, identifying success stories in different business cases. The speaker mentioned the use of GPT-3 to build small but accurate inference containers that fit well with AWS Lambda.

Yina Ye and Johan Wadenholt talked about the advantages of using generative AI in the startup context.

Yina Ye, AWS EMEA Lead for startups, differentiated between commercial generative AI offerings, such as those from OpenAI, and open-source models such as GPT-J or GPT-SW3 by AI Sweden, offering a broad view of available options. The session also navigated the challenges of applying generative AI in businesses and provided best practices to tackle them. The talk concluded with a discussion on how to work with generative AI together with AWS, using GPT-3 to build accurate inference containers that align well with AWS Lambda.

Amazon Bedrock is AWS's upcoming service for accessing generative AI foundation models. Its availability is expected to begin soon.

- The talk also addressed the challenges of applying generative AI in businesses, such as hallucination (subtle errors) and data sharing concerns, especially for B2B startups selling to enterprise companies. The speaker emphasized the importance of understanding the automation process and being able to customize solutions.

- Yina introduced Johan Wadenholt, CEO of Voxo, a Swedish tech company that uses generative AI for conversational intelligence. Founded in 2017, Voxo aims to create smart insights from all types of conversations and currently holds a market-leading position in the Nordics.

- The talk ended with a discussion on how to create insights from conversations and how Voxo uses generative AI now and plans to use it in the future. Johan also mentioned the collaboration between Voxo and AWS in the field of generative AI.

Conclusion

The Summit was a whirlwind of insights into the potential of technology to shape the future. I was left with not only a wealth of knowledge but also a sense of anticipation about the next big innovations in technology. From using technology to solve pressing problems to applying machine learning and AI in groundbreaking ways, the future looks bright indeed. And as we continue to explore and learn, we're reminded that the possibilities are as limitless as our curiosity.

Thank you, AWS, for yet another excellent Summit!

Written by:

Frans Ojala, Lead Cloud Architect at Siili, with the help of generative AI
